
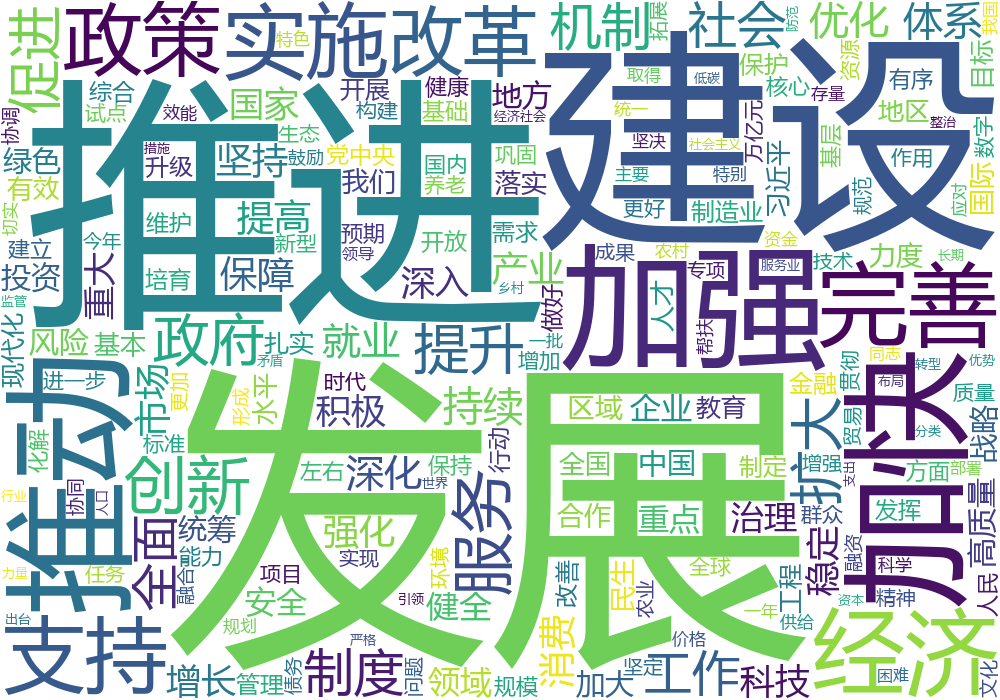

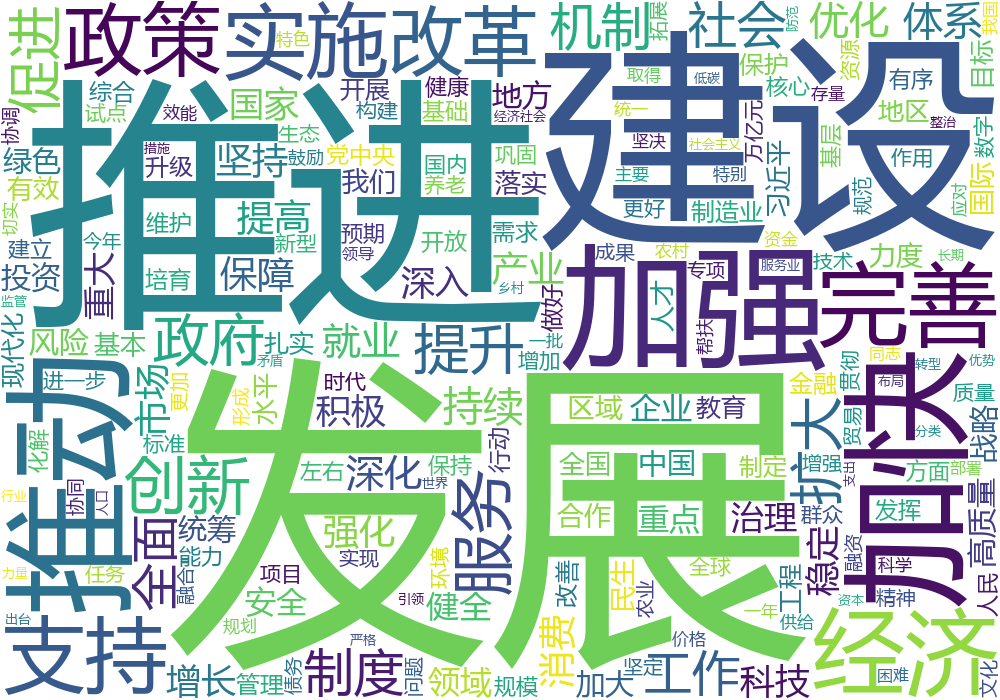

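```python
import jieba
from wordcloud import WordCloud
from collections import Counter
import matplotlib.pyplot as plt

# Read the full text of the 2025 Government Work Report
with open("2025政府工作报告.txt", "r", encoding="utf-8") as f:
    t = f.read()

# Segment the Chinese text into words with jieba
ls = jieba.lcut(t)

# Drop single-character tokens (mostly particles and punctuation)
filtered_words = [word for word in ls if len(word) > 1]

# Count how often each remaining word occurs
word_counts = Counter(filtered_words)

# A CJK-capable font is required, otherwise Chinese characters render as boxes.
# msyh.ttc (Microsoft YaHei) is a Windows font; elsewhere, point this at any CJK font file.
w = WordCloud(
    width=1000,
    height=700,
    background_color="white",
    font_path="msyh.ttc",
)

# Size each word by its precomputed frequency instead of letting WordCloud re-tokenize the text
w.generate_from_frequencies(word_counts)

# Display the word cloud without axes
plt.figure(figsize=(10, 7))
plt.imshow(w, interpolation="bilinear")
plt.axis("off")
plt.show()

# Save the image to disk
w.to_file("grwordcloude_filtered.png")
```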
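The filtering and counting step can be checked on its own, without jieba, matplotlib, or a font file. The sketch below assumes the text has already been segmented into a list of tokens; the helper name `top_words` and the sample token list are hypothetical, standing in for the output of `jieba.lcut(t)`. It applies the same length rule as the script (`min_len=2` is equivalent to `len(word) > 1`) and uses `Counter.most_common` to list the words that will be drawn largest.

```python
from collections import Counter


def top_words(tokens, min_len=2, n=10):
    """Keep tokens of at least `min_len` characters and return the `n` most frequent.

    Hypothetical helper: mirrors the script's `len(word) > 1` filter when min_len=2.
    """
    filtered = [w for w in tokens if len(w) >= min_len]
    return Counter(filtered).most_common(n)


# Hypothetical pre-segmented tokens, standing in for jieba.lcut(t)
sample = ["发展", "的", "经济", "发展", "，", "改革", "经济", "发展"]
print(top_words(sample, n=3))
```

Single-character tokens such as "的" and "，" are discarded before counting, so they never reach the word cloud. Note that this rule keeps multi-character punctuation (for example "……"); a stopword list would be needed to remove such tokens or common multi-character function words.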